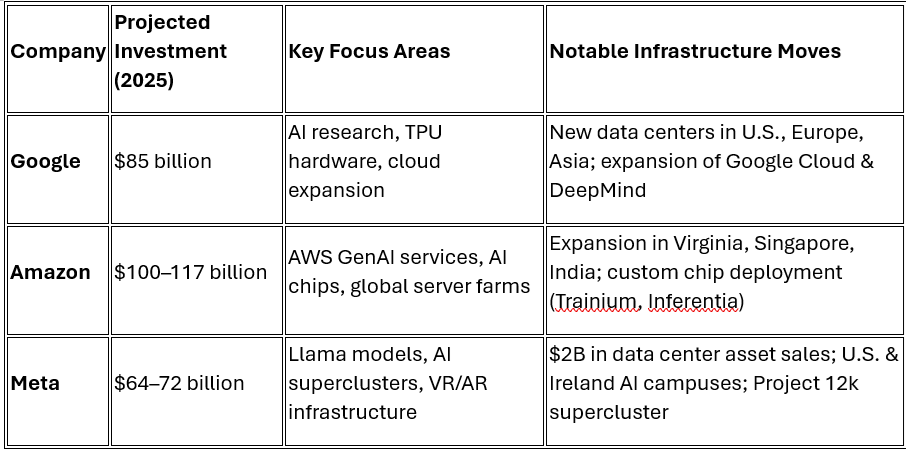

In a landmark year for artificial intelligence, tech titans Google, Amazon, and Meta are investing a combined $249 billion to $274 billion in AI infrastructure, based on their 2025 capital expenditure forecasts, in what may be the largest simultaneous capital expenditure push in tech history. Google is expanding its cloud empire and TPU hardware to meet surging demand for Gemini-powered AI services. Amazon, the largest spender, is fueling AWS with custom AI chips and vast global server farms. Meanwhile, Meta is building one of the world’s most powerful AI superclusters to support its LLaMA models and immersive tech vision. While these investments promise massive leaps in innovation, they also raise critical concerns about energy use, water stress, and long-term sustainability. Together, these companies are not just building AI tools; they are constructing the very foundation of our AI-powered future.
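As a quick sanity check on the combined figure, the per-company 2025 forecasts quoted in the sections below can be summed. This is a minimal sketch: the dictionary and function names are illustrative, and the numbers are only the ranges stated in this article, not independently verified data.

```python
# 2025 capex forecasts in billions of USD, as quoted in this article: (low, high).
# Google gives a single figure, so its low and high are the same.
CAPEX_FORECASTS = {
    "Google": (85, 85),
    "Amazon": (100, 117),
    "Meta": (64, 72),
}


def combined_range(forecasts):
    """Return the (low, high) totals summed across all companies."""
    low = sum(lo for lo, _ in forecasts.values())
    high = sum(hi for _, hi in forecasts.values())
    return low, high


low, high = combined_range(CAPEX_FORECASTS)
print(f"Combined 2025 capex: ${low}-{high} billion")
# prints "Combined 2025 capex: $249-274 billion"
```

The low end of the range lands just under $250 billion, so the headline total holds only if Amazon or Meta spends above the bottom of its forecast.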

Google: $85 Billion to Cement AI Dominance

In 2025, Google increased its capex forecast to $85 billion, marking one of the largest annual infrastructure investments in its history. The bulk of this capital is being funneled into:

- Data center expansion: Google is rapidly building and upgrading data centers in Oregon, Iowa, and Singapore, integrating carbon-free energy as part of its 2030 sustainability pledge.

- Custom hardware: Continued development and deployment of its in-house Tensor Processing Units (TPUs) to power Gemini models and support AI/ML workloads in Google Cloud.

- AI model scaling: DeepMind’s flagship Gemini and Med-Gemini models are pushing compute limits, requiring highly efficient and expansive infrastructure.

- Cloud services: Google Cloud’s Vertex AI platform, a core enterprise AI tool, is scaling to meet the surge in generative AI demand globally.

Google executives have framed this spending not just as an arms race, but as a long-term bet on becoming the most carbon-efficient and intelligent AI cloud provider.

Amazon: $100–117 Billion – Powering the AI Backbone via AWS

Amazon is expected to invest between $100 and $117 billion in 2025, the largest of any tech company this year. Nearly all of this is directed toward its AWS division, which remains the backbone of most enterprise-grade AI services.

- Data center expansions: Projects in Virginia, Ohio, South Africa, and the Asia-Pacific regions are being scaled to support generative AI workloads, including massive GPU clusters.

- Custom AI chips: Amazon continues to roll out Trainium and Inferentia chips, which power cost-effective, low-latency AI model training and inference within AWS.

- AI enterprise integrations: AWS is integrating LLMs into cloud-native tools like SageMaker, Bedrock, and Q, targeting business use cases in healthcare, finance, and retail.

- Sustainability efforts: Amazon claims it hit 100% renewable energy usage in 2023, though critics note a lack of transparency on real-world energy sourcing.

Amazon’s strategy is clear: dominate enterprise AI cloud workloads by combining infrastructure, tools, and vertical integrations, all at massive scale.

Meta: $64–72 Billion – AI Superclusters and Mixed Reality Integration

Meta has raised its 2025 capital expenditure forecast to $64–72 billion, up from $40 billion just two years ago. Much of this funding supports CEO Mark Zuckerberg’s dual vision of AI advancement and immersive computing.

- AI superclusters: Meta is building its “12k AI supercluster”, a massive compute complex equipped with 24,000 GPUs, expected to rival Google’s TPU clusters.

- Model development: Meta’s LLaMA 3 and 3.5 open-source models require significant infrastructure, especially for fine-tuning and personalization tasks in messaging apps and workplace tools.

- Data center optimization: In a strategic move, Meta announced a $2 billion asset sale of legacy data centers to cut costs and partner with third-party operators to accelerate growth.

- AR/VR investments: Infrastructure is also being directed toward Reality Labs, fueling projects like Quest and the metaverse, all increasingly powered by AI.

Despite lagging behind OpenAI and Google in product deployment, Meta is betting big on AI-native hardware, personalized agents, and long-term compute scaling.